ETL stands for “Extract, Transform, Load.”

ETL is a way of getting data, then cleaning, massaging, fixing and sometimes cajoling it into the shape you want.

So what’s so important about streaming ETL? It is the key to getting timely business intelligence for your company.

ETL systems have been around for decades. The first major commercial ETL system, Informatica, launched in 1993! ETL solves a very important data issue: the very second you begin to move data, you need to change, clean and generally transform it. For example, if you are moving customer data from one system to another, it is virtually guaranteed that the two systems will each use their own nomenclature to describe customer data. So when we move customer data, it has to go through a “conversion job.”
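To make the “conversion job” idea concrete, here is a minimal sketch in Python. The field names on both sides (`cust_nm`, `customer_name`, and so on) are made up for illustration and do not come from any particular system.

```python
# Hypothetical mapping from the source system's field names to the target's.
FIELD_MAP = {
    "cust_nm": "customer_name",
    "cust_email": "email",
    "ph": "phone",
}


def convert_customer(source_record):
    """Rename source fields to the target's names and tidy the values."""
    target = {}
    for source_key, target_key in FIELD_MAP.items():
        value = source_record.get(source_key)
        if isinstance(value, str):
            value = value.strip()  # drop stray whitespace
        target[target_key] = value
    # Lower-case the email so the same customer matches across systems.
    if target["email"]:
        target["email"] = target["email"].lower()
    return target


converted = convert_customer(
    {"cust_nm": "  Ada Lovelace ", "cust_email": "Ada@Example.com", "ph": "555-0100"}
)
print(converted)
```

Real conversion jobs tend to grow well beyond a rename table, but the shape is usually the same: one record in the source's vocabulary goes in, one record in the target's vocabulary comes out.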

Informatica broke onto the scene 30 years ago to replace what was really a lot of custom code. The essential process was: 1) get a block of source system data, 2) run a series of “jobs” to transform that data block, 3) output a batch of transformed data. Voilà. ETL was born.
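The three-step batch process described above can be sketched roughly as follows. This is an illustration only, not how Informatica or any other product is implemented; the sample rows and job names are assumptions.

```python
def extract():
    """Step 1: get a block of source data (hard-coded here for illustration)."""
    return [
        {"name": " ada ", "amount": "10"},
        {"name": "grace ", "amount": "25"},
    ]


def clean_names(batch):
    """A transformation job: trim whitespace and fix capitalization."""
    return [{**row, "name": row["name"].strip().title()} for row in batch]


def parse_amounts(batch):
    """A transformation job: convert amounts from text to integers."""
    return [{**row, "amount": int(row["amount"])} for row in batch]


JOBS = [clean_names, parse_amounts]


def run_batch_etl():
    batch = extract()
    for job in JOBS:  # Step 2: run each job over the whole block
        batch = job(batch)
    return batch  # Step 3: output the transformed batch


result = run_batch_etl()
print(result)
```

Note that every job sees the entire block, and nothing is output until the last job has finished.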

Streaming ETL History

So what has happened in ETL over the last 30 years? Oh boy, plenty! The downside to batch ETL is just that: the batch. In the days before distributed processing, with limited CPU and memory, these ETL jobs could take quite a long time to run. The default for most companies is once per day. As code-based systems, they also carry a high risk of erroring out. For example, let’s say that there are 10 transformation jobs. What happens if job 5 fails? Ouch. Fix it and start the process again. The net result is that you get daily data at best, and any error in the process takes a developer to fix and re-run.
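Here is a small Python sketch of the “job 5 fails” scenario. The ten jobs are placeholders that just append their number to the batch; the point is only to show that a failure partway through leaves nothing loaded.

```python
def make_job(number, fails=False):
    """Build a placeholder job; a real one would transform the batch."""
    def job(batch):
        if fails:
            raise ValueError(f"job {number} failed")
        return batch + [number]
    return job


# Ten jobs, with job 5 rigged to fail for the sake of the example.
jobs = [make_job(n, fails=(n == 5)) for n in range(1, 11)]


def run_all(jobs, batch):
    completed = 0
    try:
        for job in jobs:
            batch = job(batch)
            completed += 1
    except ValueError as error:
        # The whole run is abandoned; nothing reaches the target system.
        return {"loaded": False, "completed_jobs": completed, "error": str(error)}
    return {"loaded": True, "completed_jobs": completed, "error": None}


outcome = run_all(jobs, [])
print(outcome)
```

Jobs 1 through 4 did their work, but the output is discarded and the run has to start over once someone fixes job 5.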

Streaming data is different

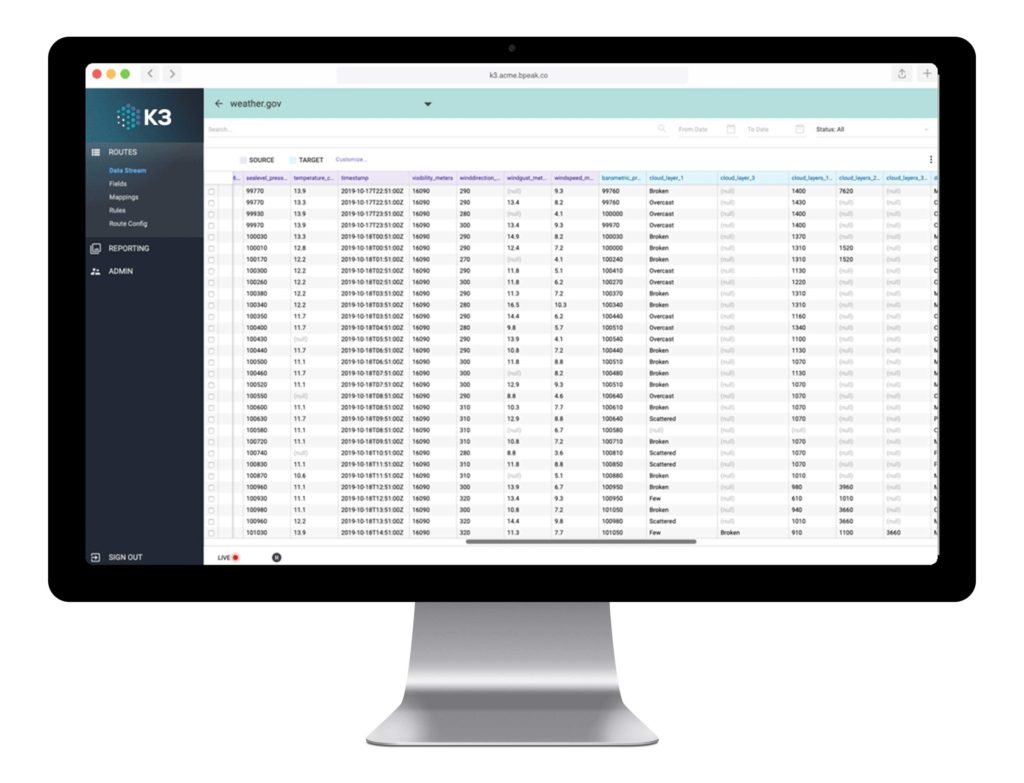

Modern data architectures allow for resilient streaming data. What is streaming ETL? Data is transformed according to the ETL logic the moment it is ingested, record by record, instead of waiting for a scheduled batch. This is somewhat of a miracle of modern processing power: even very large data sets can be distributed over many servers. The result is that data from all over the enterprise can be captured and used to provide real-time visibility into operations.
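As a rough sketch of transform-on-ingestion, the Python generator below handles each event as it arrives rather than waiting for a full block. The event fields and the `transform` logic are assumptions made for the example; a production system would read from a message queue or similar source and spread the work over many servers.

```python
def transform(event):
    """Per-record ETL logic (illustrative field names)."""
    return {
        "customer": event["name"].strip().title(),
        "amount_cents": int(event["amount"]) * 100,
    }


def stream_etl(events):
    """Yield each transformed record as soon as its source event arrives."""
    for event in events:
        yield transform(event)


# An iterator stands in for a live feed of incoming events.
incoming = iter([
    {"name": " ada ", "amount": "10"},
    {"name": "grace", "amount": "3"},
])

output = list(stream_etl(incoming))
print(output)
```

Because `stream_etl` is a generator, the first transformed record is available before the second event has even been read.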

Streaming really means streaming and batch

The other benefit is that streaming data can always be delivered either as a real-time stream or as a batch. For example, let’s say you have real-time customer information coming in that is used by your sales force. However, you want some information to go to Salesforce’s real-time API, and other information to go to its bulk load API. No problem. Streaming can always “gather up” data and send it to the bulk load API. Batch can’t go the other way. Its nature is always batch.
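The “gather up” step can be sketched in a few lines of Python. The two lists below stand in for a real-time endpoint and a bulk load endpoint; they are placeholders and not calls to any actual Salesforce API.

```python
def gather(records, batch_size):
    """Group a stream of records into lists of up to batch_size items."""
    buffer = []
    for record in records:
        buffer.append(record)
        if len(buffer) == batch_size:
            yield buffer
            buffer = []
    if buffer:  # flush whatever is left when the stream ends
        yield buffer


realtime_sent = []  # stand-in for a real-time API
bulk_sent = []      # stand-in for a bulk load API

stream = [{"id": n} for n in range(1, 8)]

# Same stream, two delivery styles.
for record in stream:
    realtime_sent.append(record)  # one call per record
for batch in gather(stream, 3):
    bulk_sent.append(batch)       # one call per gathered batch

print(len(realtime_sent), [len(b) for b in bulk_sent])
```

A real implementation would usually also flush the buffer on a timer so that a slow trickle of records does not sit waiting for the batch to fill.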

Streaming ETL Today

Over all those years, ETL has really come a long way. The modernization of the technology stack since the primitive Informatica days is really staggering. Not only has it spawned the entire concept of streaming ETL, it has also driven a number of other key advances in data management, including:

- The combination of ETL functionality with integration functionality

- The advent of low code ETL and no code ETL

- The advent of low code integration

- The advent of low code data connections for files, databases, and APIs

What is the result of all of these features? Real time business insight that does not take an army of people to deliver. This is a major step change for technology and business operations.

There are a lot of data ETL and integration platforms on the market. If you’d like to see real advances in low code streaming ETL just click the link below and we’d be happy to get you a demo.